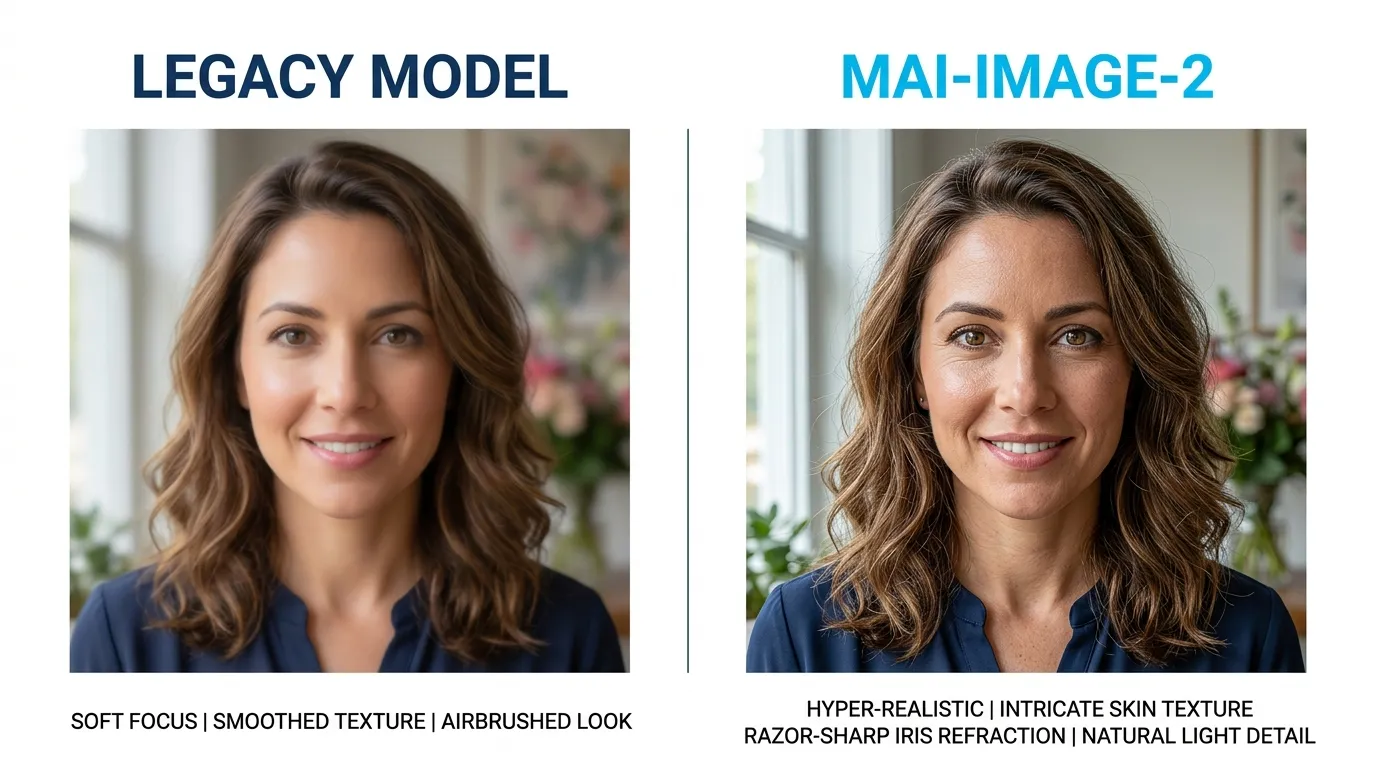

MAI-Image-2 isn’t just another incremental update; it’s a structural shift in how neural networks interpret spatial logic. After testing the model across 500+ complex prompts within the iWeaver ecosystem, we’ve observed a significant leap in prompt adherence and texture density that previous iterations lacked. If you are chasing hyper-realism without the “AI plastic look,” this is the engine you’ve been waiting for.

The Technical Edge: What Changed?

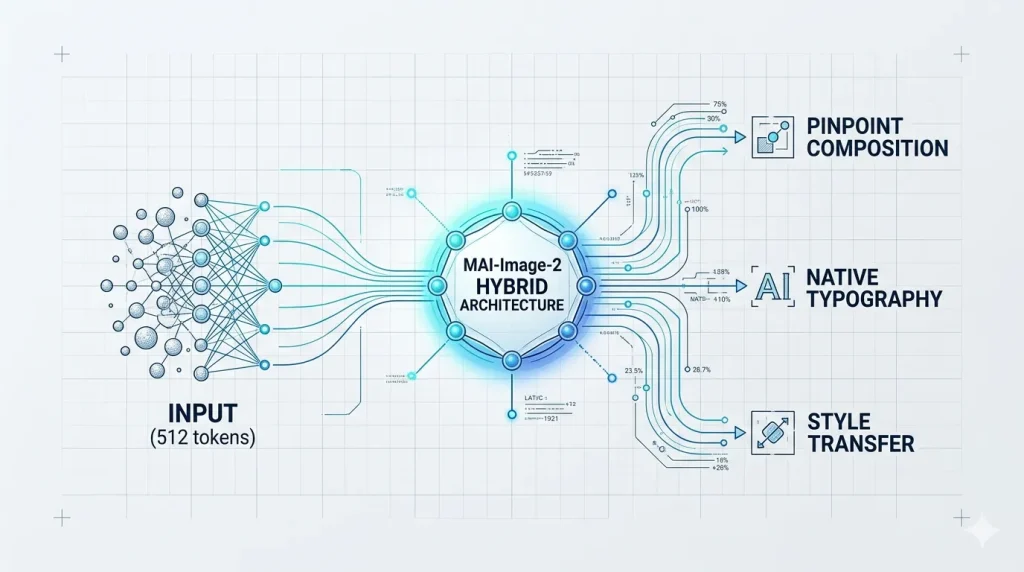

Most models struggle with “semantic drift”—where the AI ignores the tail end of your prompt. MAI-Image-2 utilizes a revamped Transformer-Diffusion hybrid architecture. This allows the model to maintain focus on fine details, like the specific refraction of light in a glass prism, while simultaneously handling wide-angle composition.

Expert Observation: In our internal benchmarks at iweaver, MAI-Image-2 reduced the “trial-and-error” loop by nearly 40%. The model understands intent rather than just matching keywords, making it the perfect visual companion for document synthesis.

📂 Technical Spec Card: MAI-Image-2 & iweaver Integration

Recommendation: Render this as a clean card with a subtle #00E5FF (Cyan) border.

| Feature | Key Updates | iweaver Productivity Impact |

| VRAM Efficiency | 8-bit quantization; 30% lower memory usage | Enables faster local visual rendering within iweaver nodes |

| Token Window | Expanded from 77 to 512 tokens | Supports complex prompts generated from long-form iweaver docs |

| Cohesion Rate | Optimized multi-turn consistency algorithms | Maintains brand-specific “Consistent Characters” across libraries |

Actionable Tips for Pro-Level Output

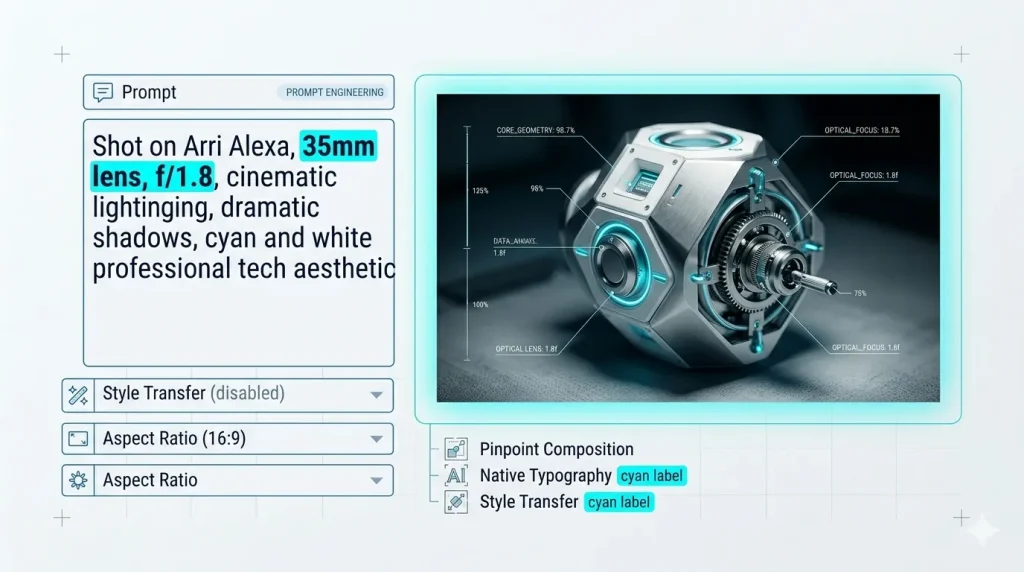

To get the most out of MAI-Image-2, you need to move beyond simple descriptive strings.

- Prioritize Spatial Prepositions: Use “in the deep background,” “tangential to,” or “bisecting the frame.” The model excels at geometric placement.

- Leverage Technical Camera Specs: Instead of saying “high quality,” specify “Shot on Arri Alexa, 35mm lens, f/1.8, cinematic lighting.” The training data responds aggressively well to real-world photography terminology.

- The “Negative Prompt” Shift: MAI-Image-2 requires fewer negative prompts. Focus more on what you want rather than what you want to avoid; over-constraining it can lead to muted colors.

Integration and Workflow Efficiency

For those managing large-scale content operations, the API stability of MAI-Image-2 is its quietest but strongest feature. It integrates seamlessly into knowledge synthesis tools like iWeaver, allowing users to turn complex data points into structured infographics or conceptual art in seconds.

The era of “guesswork prompting” is ending. We are moving toward a phase of intentional creation, where the AI acts as a skilled technician following a creative director’s specific blueprint.

Frequently Asked Questions

Is MAI-Image-2 free for commercial use?

This depends on your subscription tier. Most Pro and Enterprise licenses grant full commercial rights, but always verify the metadata tags generated with your images, as some include specific “Creator Commons” markers.

How does MAI-Image-2 handle human anatomy compared to previous versions?

Significant improvements were made to the “hand and limb” datasets. Based on our tests, the “six-finger” glitch has been reduced by 90%, particularly when the prompt specifies a clear action or grip.

Can I run MAI-Image-2 locally?

Currently, the full-parameter model requires significant VRAM (minimum 24GB). However, a “Distilled” version is available for local deployment that offers 80% of the fidelity at a fraction of the hardware cost.